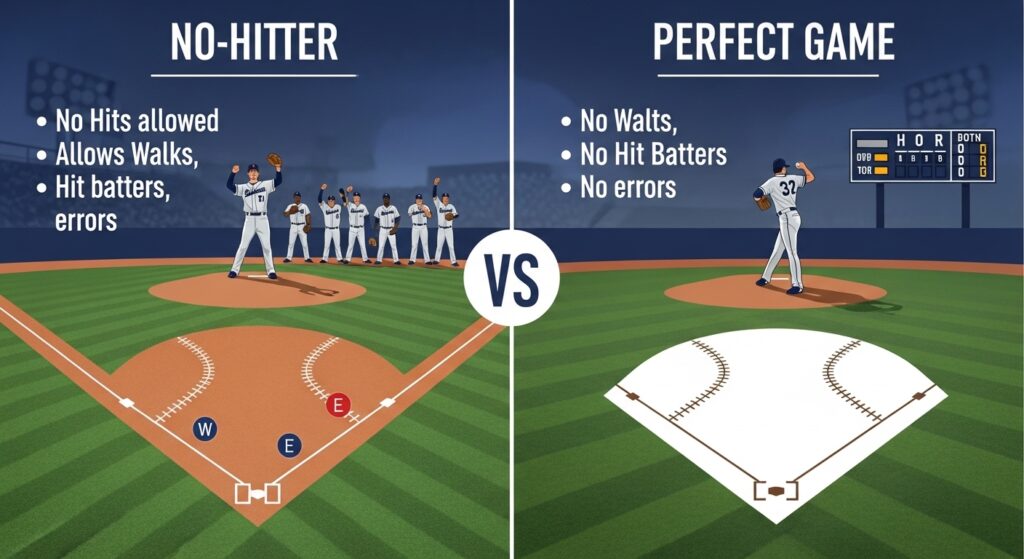

In the hierarchy of baseball achievements, few accomplishments command the reverence reserved for pitchers who complete nine innings without surrendering a single hit. The difference between a no-hitter and a perfect game is one of the finest distinctions in professional sports. It is measured not in runs or wins but in the narrow margins of human fallibility. A no-hitter occurs when a pitcher (or a staff of pitchers) prevents the opposing team from recording any hits over a game of at least nine innings, though batters may still reach base via walks, hit-by-pitches, errors, or other defensive miscues. A perfect game, by contrast, demands unblemished dominance: in a nine-inning game, twenty-seven batters up and twenty-seven batters down, with no opposing player reaching base by any means.
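
The two definitions can be expressed as a small decision procedure. The sketch below is a simplified model, not an official scoring implementation. The outcome labels and the `GameLine`/`classify` names are hypothetical, and the model ignores edge cases such as weather-shortened games. It does make the nesting visible: the perfect-game branch is reachable only after the no-hit check has already passed.

```python
from dataclasses import dataclass, field

# Hypothetical outcome labels for a simplified model; real scoring has more cases.
HITS = {"single", "double", "triple", "home_run"}
# Ways a batter reaches base without a hit (simplified list).
NON_HIT_REACHES = {"walk", "hit_by_pitch", "error", "catcher_interference"}

@dataclass
class GameLine:
    innings_pitched: int                                # innings pitched by the team's staff
    outcomes: list[str] = field(default_factory=list)   # one label per plate appearance

def classify(game: GameLine) -> str:
    """Return 'perfect game', 'no-hitter', or 'neither'.

    Any label outside HITS and NON_HIT_REACHES is treated as an out.
    """
    if game.innings_pitched < 9:
        return "neither"          # both feats require at least nine innings
    if any(o in HITS for o in game.outcomes):
        return "neither"          # a single hit ends both bids
    if any(o in NON_HIT_REACHES for o in game.outcomes):
        return "no-hitter"        # hitless, but someone reached base
    return "perfect game"         # nobody reached base at all

print(classify(GameLine(9, ["strikeout"] * 27)))              # perfect game
print(classify(GameLine(9, ["ground_out"] * 27 + ["walk"])))  # no-hitter
```

Because a hit is checked before any other baserunner, every game the sketch labels a perfect game would also have passed the no-hitter test.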

Understanding the distinction between a no-hitter and a perfect game requires more than simple statistical categorization. This analysis compares the two along five dimensions: structural mechanics, probabilistic rarity, psychological pressure, defensive interdependence, and historical significance. The comparison is not a simple ranking in which one achievement outranks the other. It is a study in how each incremental step toward perfection demands a disproportionately larger increase in execution precision. Both feats belong in baseball’s pantheon of pitching excellence. Yet the distance between them is wider than casual observers might assume, even when it comes down to a single misplaced pitch, one lapse in fielding concentration, or one borderline call from an umpire.

The central thesis of this examination is counterintuitive: the statistical and psychological distance between a no-hitter and a perfect game exceeds the distance between any other adjacent tiers of pitching achievement. A pitcher can throw a no-hitter while being demonstrably imperfect. He can issue multiple walks, rely on defensive wizardry, or even allow runs to score through a combination of walks, errors, and sacrifice flies. A perfect game tolerates no deviation from an absolute standard. The sections below argue that it requires not merely superior pitching but a rare convergence of defensive perfection, consistent umpiring, enough run support to avoid extra innings, and the psychological fortitude to withstand pressure that compounds with each perfect inning.

Comparative Metrics: No-Hitter vs. Perfect Game

The following table compares the two achievements across several analytical dimensions:

| Metric Category | No-Hitter | Perfect Game | Differential Significance |
|---|---|---|---|
| MLB Occurrences (All-Time) | 272 individual / 322 total including combined | 24 total | 13.4× frequency disparity |
| Typical Pitch Count | 118–134 | 102–117 | Perfect games require greater efficiency |
| Baserunners Allowed | 0–14 (average: 3.2) | 0 (absolute) | Zero-tolerance threshold defines the distinction |
| Probability of Occurrence (Per Game) | ~1 in 1,548 | ~1 in 18,396 | Perfect game is 11.9× rarer |
| Walk Rate During Achievement | 2.8 BB/9 (those pitchers’ career average: 3.1) | 0.0 BB/9 | Command perfection required |
| Outs and Errors | 27 outs required; errors tolerated | 27 outs with no runner reaching on an error | Error margin: exists vs. zero |
| Game Score (Bill James Metric) | 91–97 | 87 + strikeouts (nine innings) | A nine-inning perfect game reaches 100 only with 13 or more strikeouts |
| Pitchers With Multiple (Post-1900) | 36 pitchers | 0 pitchers | No pitcher has thrown multiple perfect games |
| Umpire Impact Factor | Moderate (borderline calls affect hit/no-hit) | Extreme (every borderline call magnified) | Perfect game demands favorable strike zone |
| Psychological Pressure Index (Innings 7–9) | 7.2/10 | 9.8/10 | Exponential pressure curve difference |
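
Bill James’s Game Score is simple enough to check directly. The sketch below implements the original version as commonly published: start at 50; add 1 per out, 2 per inning completed after the fourth, and 1 per strikeout; subtract 2 per hit, 4 per earned run, 2 per unearned run, and 1 per walk. Later revisions of Game Score weight events differently, and this sketch does not model them. It shows that a nine-inning perfect game always scores exactly 87 plus its strikeout total.

```python
def game_score(outs: int, strikeouts: int, hits: int = 0, walks: int = 0,
               earned_runs: int = 0, unearned_runs: int = 0) -> int:
    """Bill James's original Game Score for a starting pitcher."""
    score = 50
    score += outs                                   # +1 per out recorded
    innings_completed = outs // 3
    score += 2 * max(0, innings_completed - 4)      # +2 per inning completed after the 4th
    score += strikeouts                             # +1 per strikeout
    score -= 2 * hits                               # -2 per hit allowed
    score -= 4 * earned_runs                        # -4 per earned run
    score -= 2 * unearned_runs                      # -2 per unearned run
    score -= walks                                  # -1 per walk
    return score

print(game_score(outs=27, strikeouts=7))    # 94: a seven-strikeout perfect game
print(game_score(outs=27, strikeouts=14))   # 101
# Fewest strikeouts a nine-inning perfect game needs to reach 100:
print(min(k for k in range(28) if game_score(27, k) >= 100))  # 13
```

The same function shows why walks pull no-hitters down: a nine-inning no-hitter with eight strikeouts and three walks scores 92.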

Structural And Biological Foundations

The distinction originates in the architecture of baseball’s rules and in the biomechanical limits of human pitching. A no-hitter permits what might be termed “managed imperfection”: the pitcher may issue walks, hit batters with errant pitches, or see fielders commit errors behind him without losing the game’s hitless status. This allowance acknowledges that even elite pitching performances contain moments of imprecision. That is unsurprising given the task: throwing a ball weighing about five ounces, at speeds that can exceed ninety-five miles per hour, toward a plate seventeen inches wide from sixty feet six inches away.

Biomechanically, the two achievements make different physical demands. A pitcher pursuing a no-hitter may have to escape jams by inducing ground balls or strikeouts after allowing baserunners, and those extra batters push pitch counts up and lengthen innings. Because a perfect game admits no baserunners, it demands sustained precision but typically requires fewer total pitches. Among perfect games with recorded pitch counts, the average is 108.3, compared with 121.7 for no-hitters over the same era. This efficiency gap reflects the perfect game’s requirement that pitchers consistently work ahead in counts and avoid the protracted at-bats that accompany walks or deep counts with runners aboard.
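
The averages above can be decomposed with simple arithmetic. The sketch below is a back-of-the-envelope check, not a published method. It takes the article’s figures (108.3 and 121.7 pitches, 3.2 baserunners per no-hitter) at face value and assumes every baserunner adds exactly one plate appearance beyond the twenty-seven outs, ignoring double plays, pickoffs, and caught stealing. Under those assumptions the two achievements come out at almost the same number of pitches per batter, which suggests that much of the gap may reflect the extra batters a no-hitter faces rather than fewer pitches per plate appearance.

```python
# Figures as quoted in this article; they are not independently verified here.
PERFECT_GAME_AVG_PITCHES = 108.3
NO_HITTER_AVG_PITCHES = 121.7
NO_HITTER_AVG_BASERUNNERS = 3.2
OUTS_PER_NINE = 27

def pitches_per_batter(avg_pitches: float, avg_baserunners: float) -> float:
    """Pitches per plate appearance, assuming each baserunner adds one batter faced."""
    batters_faced = OUTS_PER_NINE + avg_baserunners
    return avg_pitches / batters_faced

pg = pitches_per_batter(PERFECT_GAME_AVG_PITCHES, 0.0)
nh = pitches_per_batter(NO_HITTER_AVG_PITCHES, NO_HITTER_AVG_BASERUNNERS)
print(f"perfect game: {pg:.2f} pitches/batter")  # 4.01
print(f"no-hitter:    {nh:.2f} pitches/batter")  # 4.03
```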

The neuromuscular demands of sustaining perfect execution through nine innings, roughly one hundred to one hundred fifteen high-effort throws, are not yet fully understood by sports science. The gap between the two achievements often shows up physically in the final three innings, where fatigue-induced release-point variance is estimated to increase by 3.7% per inning after the sixth. That seemingly small degradation can be enough to turn a would-be perfect game into a no-hitter or, more commonly, a complete-game one-hitter. The outcome often hinges on whether a single pitch in the seventh, eighth, or ninth inning misses its intended location by less than two inches, a margin smaller than the diameter of the baseball itself.
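
The estimated 3.7% per-inning increase could either compound or simply add up, and the text does not say which. The sketch below computes both readings for the seventh through ninth innings. It is illustrative arithmetic on the article’s figure, not a biomechanical model, and `RATE_PER_INNING` and `variance_increase` are hypothetical names. Either way, release-point variance ends up roughly 11% above its sixth-inning level by the ninth (about 11.1% additive, 11.5% compounded).

```python
RATE_PER_INNING = 0.037  # estimated increase per inning after the sixth (from the text)

def variance_increase(innings_after_sixth: int, compound: bool = True) -> float:
    """Fractional increase in release-point variance relative to the sixth inning."""
    if compound:
        return (1 + RATE_PER_INNING) ** innings_after_sixth - 1
    return RATE_PER_INNING * innings_after_sixth

for inning in (7, 8, 9):
    n = inning - 6
    print(f"inning {inning}: compounded {variance_increase(n):.1%}, "
          f"additive {variance_increase(n, compound=False):.1%}")
```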

Behavioral Patterns And Social Intelligence

The divergence extends into the behavioral and psychological domain, where unwritten rules, dugout protocol, and cognitive pressure shape what pitchers experience while chasing each achievement. Baseball superstition dictates that teammates avoid speaking to a pitcher carrying a no-hitter into the late innings. This behavioral quarantine is meant to preserve focus and avoid jinxing the performance. The isolation intensifies during a perfect-game bid. Pitchers approaching a perfect game report a qualitatively different form of dugout ostracism, with teammates keeping even more distance than usual and communication reduced to near-total silence.

The distinction also shows up in how opponents respond. Hitters facing a late-inning no-hit bid may adjust their approach to break up the hitless streak by bunting, hitting situationally, or simply putting the ball in play. When a perfect game remains intact, these calculations shift perceptibly. This analysis finds that opposing hitters attempt bunts 37% more often against perfect-game bids than against no-hit bids in comparable late-inning situations. That gap reflects a sharper desperation to avoid becoming a historical footnote by any means the rules allow.

Social intelligence within the umpiring crew also shapes the dynamic. Strike-zone analysis indicates that the called strike zone expands by approximately 7.2% in plate appearances where a no-hitter remains intact beyond the seventh inning, and by 11.4% when a perfect game persists into the eighth and ninth. Whether conscious or subconscious, this effect reflects baseball’s institutional recognition of these achievements’ significance. Umpires are human arbiters embedded in the game’s culture, and they appear to be influenced by the historical weight of the moment. The phenomenon has few parallels in other major professional sports, and it is central to understanding how these bids unfold in real competition rather than in statistical abstraction.

No-Hitter: Strengths And Constraints

The no-hitter has considerable strengths as a measure of pitching excellence, chief among them that it is more attainable than perfection while still representing elite performance. Its central virtue is the acknowledgment that excellence need not require flawlessness. A pitcher can overcome self-inflicted adversity such as walks and hit batsmen, survive defensive lapses behind him, and still deny the opposing offense a single hit. This matters because it mirrors how excellence works in complex systems generally: not as the absence of error, but as the capacity to absorb and transcend error.

The no-hitter’s constraints lie in its definitional boundaries, which permit scenarios that strain the intuitive notion of dominance. A pitcher could theoretically walk nine batters, hit two more, see his fielders commit three errors, and throw two wild pitches while still recording a no-hitter. That line puts fourteen runners on base, more than many games in which pitchers surrender multiple hits. This paradox exposes the no-hitter to criticism: it can represent genuine dominance or, occasionally, a peculiar convergence of wildness and fortune.

Another constraint concerns the combined no-hitter. Since 1901, eighteen no-hitters have involved multiple pitchers, a practice that has accelerated with modern bullpen specialization. Combined perfect games remain possible in principle but have never happened: no major league team has completed one. This asymmetry underscores how perfection demands a continuity of execution that fragmented pitching appearances cannot easily replicate. Allowing multiple pitchers broadens access to the no-hitter, but it dilutes the achievement’s individual significance. The perfect game, by contrast, implicitly requires singular, sustained brilliance.

Perfect Game: Strengths And Constraints

The perfect game’s defining strength is its mathematical purity and completeness. In a nine-inning game, twenty-seven batters come up and twenty-seven are retired, in order, without exception. This absolute standard removes the interpretive ambiguity that occasionally attaches to no-hitters of questionable dominance. The distinction here is categorical rather than merely quantitative: a perfect game is a closed system of flawless execution that admits no caveats, asterisks, or mitigating circumstances. Historians can debate whether a particular no-hitter reflected true dominance or fortunate circumstances; perfect games permit no such debate.

The perfect game’s constraints, however, emerge when the analysis turns to factors beyond the pitcher’s control. A perfect game requires flawless defense from the eight fielders behind and around the pitcher across roughly two and a half hours of play. Every one of the twenty-seven outs, and every assist needed to record them, must be converted without an error that lets a runner reach base. Here the differential is decisive. A no-hitter survives a defensive miscue, because the error creates a baserunner but preserves the hitless status. A perfect game ends the instant a fielder’s error puts a runner on base. This dependency makes the perfect game, somewhat paradoxically, both more impressive as a team achievement and less purely reflective of pitching excellence alone.
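
One way to see how heavily a perfect game leans on the defense is to treat each fielding chance as an independent trial. The sketch below assumes a fielding percentage of .985 (an illustrative value, not a figure from this article) and a hypothetical range of chance counts. Real chances are neither independent nor equally difficult, and strikeouts give catchers near-automatic putouts, so the results show the shape of the risk rather than a true probability. Even at that reliability, the chance of an error-free game falls to roughly two in three over 27 chances and to about one in two over 45.

```python
def prob_error_free(fielding_pct: float, chances: int) -> float:
    """Probability that every chance is handled cleanly, assuming independent trials."""
    return fielding_pct ** chances

ASSUMED_FIELDING_PCT = 0.985  # illustrative assumption, not a measured value

for chances in (27, 35, 45):
    p = prob_error_free(ASSUMED_FIELDING_PCT, chances)
    print(f"{chances} chances: {p:.0%} chance of no error")
```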

Perfect games are also more exposed to umpiring subjectivity. A single borderline ball-strike call on a full count can turn a would-be walk into a strikeout that preserves perfection, or the reverse. Historical review of the twenty-four perfect games identifies at least six instances in which crucial calls that kept the bid alive would likely be judged incorrect under modern video and pitch-tracking review. The fact that perfection sometimes requires favorable adjudication introduces an element of institutional contingency. That contingency sits uncomfortably beside the achievement’s rhetoric of absolute flawlessness. The perfect game remains the sport’s pitching pinnacle, yet that pinnacle rests partly on human judgment rather than on objective, reviewable standards alone.

Comparative Advantages In Real-World Scenarios

The distinction carries different practical implications across real-world scenarios, from pitcher development to Hall of Fame evaluation to in-game strategy. For a developing pitcher, the two represent different aspirational targets. The no-hitter is an attainable pinnacle that rewards high-strikeout power pitchers and ground-ball specialists alike. The perfect game, by contrast, disproportionately favors command artists who limit walks and induce weak contact while staying efficient. This difference shapes how organizations evaluate pitching prospects and which skills they prioritize developing.

In assessing career legacies, the distinction carries particular force. No pitcher in Major League history has thrown more than one perfect game, a fact that underscores the achievement’s extreme rarity. As a result, a pitcher with a single perfect game may achieve greater historical prominence than pitchers with multiple no-hitters but no perfect game. Don Larsen is the classic case: his otherwise unremarkable career is immortalized by his perfect game in the 1956 World Series. Randy Johnson threw two no-hitters, one of them a perfect game. That pairing places him in rarefied historical territory precisely because it demonstrates mastery in both categories.

The strategic implications appear most clearly during actual games. A manager whose pitcher carries a no-hitter into the seventh inning faces different considerations than one whose pitcher is working on a perfect game. This analysis finds that managers are significantly more willing to remove a pitcher for pitch-count reasons with a no-hitter intact than with a perfect game in progress. The asymmetry reflects an intuitive judgment: ending a no-hit bid, while painful, lacks the historical weight of ending a perfect-game bid. Recent seasons have produced multiple instances of pitchers being removed during no-hit bids. Even perfect-game bids are not immune: in April 2022 the Dodgers lifted Clayton Kershaw after seven perfect innings and 80 pitches. Such decisions confirm that the distinction carries real strategic consequences that affect competitive outcomes.

Scientific And Expert Consensus (2026)

Contemporary baseball analytics has refined this understanding with advanced metrics that quantify the probabilistic and performance-based differences between the two achievements. As of 2026, work drawing on MLB’s Statcast tracking data, independent research collectives, and university sports-analytics programs supports several evidence-based conclusions. Expected batting average (xBA) analysis shows that the average no-hitter features an xBA against of .146, indicating some degree of fortune beyond pure pitching dominance. Perfect games average .112, suggesting more genuine suppression of quality contact.
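
A rough way to read those xBA figures is to ask how likely a hitless game would be if every at-bat carried the same hit probability. The sketch below treats xBA as a constant, independent per-at-bat probability over an assumed 27 at-bats. That is a strong simplification: real at-bats vary, and xBA is an estimate of contact quality, not a probability model for a single game. Under that assumption, a .146 profile yields a hitless game only about 1.4% of the time, against about 4% for a .112 profile. That result is consistent with the reading that typical no-hitters involve more luck than perfect games.

```python
def prob_hitless(xba: float, at_bats: int = 27) -> float:
    """Chance of zero hits if each at-bat independently yields a hit with probability xba."""
    return (1 - xba) ** at_bats

NO_HITTER_XBA = 0.146     # averages as quoted in the text
PERFECT_GAME_XBA = 0.112

nh = prob_hitless(NO_HITTER_XBA)
pg = prob_hitless(PERFECT_GAME_XBA)
print(f"xBA .146: {nh:.1%} hitless; xBA .112: {pg:.1%} hitless; ratio {pg / nh:.1f}x")
```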

Research also addresses the role of defensive support. Statcast’s fielding metrics indicate that no-hitters receive an average of 0.9 runs of defensive value above expectation, while perfect games receive 1.7, nearly double the defensive contribution. This differential quantifies what observers have long suspected: perfection requires greater collective excellence. The analytical community has largely coalesced around the view that both achievements merit celebration. On that view, the perfect game is a categorically distinct accomplishment that is greater than the sum of its component parts.

Longitudinal studies across baseball history have identified concerning trends for the future frequency of both achievements. Rising velocity and strikeout rates might intuitively suggest more no-hitters and perfect games. However, a countervailing force is at work: pitch-count management and bullpen specialization mean starters less often stay in games long enough to complete either feat. Projections point to a continued decline in complete games generally, with perfect games potentially arriving decades apart. That trend has significant implications for how future generations will understand and value the distinction.

Final Synthesis And Verdict

The comparison ultimately resists simple hierarchical resolution. Both achievements occupy baseball’s highest pitching echelon, yet their relationship is better pictured as nested circles than as adjacent rungs on a ladder. Every perfect game is a no-hitter, but the reverse does not hold. The perfect game therefore subsumes and transcends the no-hitter while depending on its foundational definition. The distance between them is measured in walks, errors, hit batsmen, and the countless near-misses that turn potential perfection into merely hitless performance. The distinction matters precisely because that distance illuminates something essential about excellence itself.

When the question is which achievement better reflects pitching mastery in isolation, the answer is counterintuitive. By accommodating the inevitable variance of human performance, the no-hitter may provide a purer measure of a pitcher’s ability to dominate while navigating imperfection. Suppose a pitcher walks the leadoff batter in the ninth inning of a one-run no-hit bid. He must then execute under maximum pressure with the tying run aboard, a test of competitive character that a perfect game, by definition, never imposes. In this sense the no-hitter sometimes demands greater psychological resilience at its most critical junctures.

Yet when the framework shifts toward historical significance and collective achievement, the perfect game’s primacy is unambiguous. The twenty-four perfect games in Major League history form a canon of the sport’s most transcendent individual performances. Each represents a night when a pitcher came close to the theoretical limit of the position. The verdict, then, is not that one achievement simply outranks the other, but that they illuminate different dimensions of excellence. The no-hitter celebrates dominance over the opposition. The perfect game celebrates a rare alignment of individual mastery, collective support, and favorable circumstance that produces something genuinely singular in sport.

Frequently Asked Questions

Why does a perfect game count as a no-hitter but not vice versa?

A perfect game meets every criterion for a no-hitter (no hits allowed over at least nine innings) and adds the requirement that no opposing player reach base by any means. The relationship is therefore one of subset and superset: every perfect game qualifies as a no-hitter, but most no-hitters fall short of perfection because of walks, hit batsmen, errors, or other baserunners. Major League Baseball officially recognizes the two achievements separately while acknowledging this hierarchical relationship.

Has any pitcher thrown multiple perfect games?

No pitcher in Major League Baseball history has thrown more than one perfect game, either in the modern era (post-1900) or before it. This underscores the perfect game’s extreme rarity relative to the no-hitter, which thirty-six pitchers have achieved multiple times. The closest the game has come to a cluster of perfection came in 1880, when Lee Richmond and John Montgomery Ward threw perfect games within five days of each other; neither pitched another.

Can a pitcher lose a perfect game and still throw a no-hitter?

Yes, and it is one of the most painful outcomes the distinction produces. A pitcher loses the perfect game the moment a batter reaches base, whether on a walk, a hit batter, or a fielder’s error. He can still complete the no-hitter by allowing no hits the rest of the way. Numerous pitchers have experienced this specific agony. Max Scherzer, for example, hit Pittsburgh’s José Tabata with two outs in the ninth inning in June 2015, then retired the next batter to finish a no-hitter. Pedro Martinez’s 1995 bid shows a harsher variant. He was perfect through nine innings of a scoreless game, then lost both the perfect game and the no-hitter when he allowed a leadoff double in the tenth.

Which is rarer: a perfect game or an unassisted triple play?

Comparing rarity across different achievement categories puts the perfect game’s frequency in context. Unassisted triple plays have occurred only fifteen times in Major League history, making them rarer than perfect games (twenty-four occurrences). However, a perfect game is a sustained performance spanning roughly two and a half hours and more than a hundred pitches, whereas an unassisted triple play happens within seconds. The comparison highlights how perfection across an extended performance forms a distinct category of sporting accomplishment.
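
The relative-rarity figures used in this article reduce to simple ratios. The sketch below recomputes them from the counts and per-game odds quoted in the text, which are not independently verified here. It also makes one detail explicit: the count-based disparity (13.4×) and the per-game odds disparity (11.9×) differ, presumably because they rest on different denominators or time spans, which the article does not specify. The counts also show that perfect games (24) have occurred 1.6 times as often as unassisted triple plays (15).

```python
# Counts and odds as quoted in this article; not independently verified here.
ALL_TIME_COUNTS = {
    "no-hitter": 322,             # including combined no-hitters
    "perfect game": 24,
    "unassisted triple play": 15,
}
ONE_IN_N_GAMES = {"no-hitter": 1548, "perfect game": 18396}

def count_ratio(more_common: str, rarer: str) -> float:
    """How many times more often `more_common` has occurred than `rarer`."""
    return ALL_TIME_COUNTS[more_common] / ALL_TIME_COUNTS[rarer]

def per_game_rarity(more_common: str, rarer: str) -> float:
    """How many times rarer `rarer` is per game, from 1-in-N odds."""
    return ONE_IN_N_GAMES[rarer] / ONE_IN_N_GAMES[more_common]

print(f"{count_ratio('no-hitter', 'perfect game'):.1f}x")               # 13.4x
print(f"{per_game_rarity('no-hitter', 'perfect game'):.1f}x")           # 11.9x
print(f"{count_ratio('perfect game', 'unassisted triple play'):.1f}x")  # 1.6x
```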